Spark的算子分为两类:

一类叫做Transformation(转换),延迟加载,它会记录元数据信息,当计算任务触发Action,才会真正开始计算; 一类叫做Action(动作);

一个算子会产生多个RDD

RDD(Resilient Distributed Dataset)叫做分布式数据集,是Spark中最基本的数据抽象,它代表一个不可变、可分区、里面的元素可并行计算的集合。

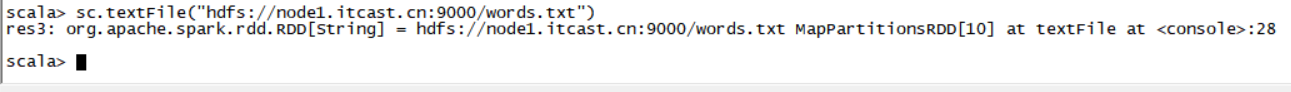

一、RDD创建方式

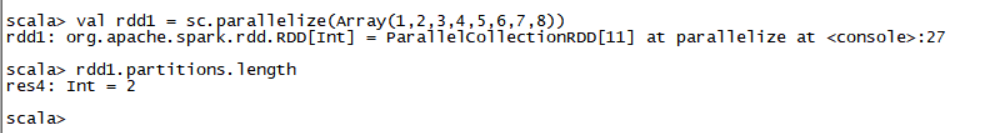

方式一:通过HDFS支持的文件系统系统创建,RDD里没有真正要计算的数据,只是记录了一下元数据  方式二:通过Scala集合或数组以并行化方式创建

方式二:通过Scala集合或数组以并行化方式创建

二、RDD特点

1、一台机器上有多个分区; 2、一个函数会作用到一个分区; 3、RDD之间有一系列依赖; 4、如果是key-value类型,会有分区器; 5、RDD会有一个最佳位置;

三、RDD练习

val rdd1 = sc.parallelize(List(5,6,4,7,3,8,2,9,1,10)) val rdd2 = sc.parallelize(List(5,6,4,7,3,8,2,9,1,10)).map(*2).sortBy(x=>x,true) val rdd3 = rdd2.filter(>10) val rdd2 = sc.parallelize(List(5,6,4,7,3,8,2,9,1,10)).map(*2).sortBy(x=>x+“”,true) val rdd2 = sc.parallelize(List(5,6,4,7,3,8,2,9,1,10)).map(*2).sortBy(x=>x.toString,true)

val rdd4 = sc.parallelize(Array(“a b c”, “d e f”, “h i j”)) rdd4.flatMap(_.split(’ ')).collect

val rdd5 = sc.parallelize(List(List(“a b c”, “a b b”),List(“e f g”, “a f g”), List(“h i j”, “a a b”)))

rdd5.flatMap(.flatMap(.split(" "))).collect

#union求并集,注意类型要一致 val rdd6 = sc.parallelize(List(5,6,4,7)) val rdd7 = sc.parallelize(List(1,2,3,4)) val rdd8 = rdd6.union(rdd7) rdd8.distinct.sortBy(x=>x).collect

#intersection求交集 val rdd9 = rdd6.intersection(rdd7)

val rdd1 = sc.parallelize(List((“tom”, 1), (“jerry”, 2), (“kitty”, 3))) val rdd2 = sc.parallelize(List((“jerry”, 9), (“tom”, 8), (“shuke”, 7)))

#join val rdd3 = rdd1.join(rdd2) val rdd3 = rdd1.leftOuterJoin(rdd2) val rdd3 = rdd1.rightOuterJoin(rdd2)

#groupByKey val rdd3 = rdd1 union rdd2 rdd3.groupByKey rdd3.groupByKey.map(x=>(x._1,x._2.sum))

#WordCount, 第二个效率低 sc.textFile(“/root/words.txt”).flatMap(x=>x.split(" ")).map((,1)).reduceByKey(+).sortBy(.2,false).collect sc.textFile(“/root/words.txt”).flatMap(x=>x.split(" ")).map((,1)).groupByKey.map(t=>(t._1, t._2.sum)).collect

#cogroup val rdd1 = sc.parallelize(List((“tom”, 1), (“tom”, 2), (“jerry”, 3), (“kitty”, 2))) val rdd2 = sc.parallelize(List((“jerry”, 2), (“tom”, 1), (“shuke”, 2))) val rdd3 = rdd1.cogroup(rdd2) val rdd4 = rdd3.map(t=>(t._1, t._2._1.sum + t._2._2.sum))

#cartesian笛卡尔积 val rdd1 = sc.parallelize(List(“tom”, “jerry”)) val rdd2 = sc.parallelize(List(“tom”, “kitty”, “shuke”)) val rdd3 = rdd1.cartesian(rdd2)

#spark action val rdd1 = sc.parallelize(List(1,2,3,4,5), 2)

#collect rdd1.collect

#reduce val rdd2 = rdd1.reduce(+)

#count rdd1.count

#top rdd1.top(2)

#take rdd1.take(2)

#first(similer to take(1)) rdd1.first

#takeOrdered rdd1.takeOrdered(3)

四、高级算子方法

map是对每个元素操作, mapPartitions是对其中的每个partition操作

mapPartitionsWithIndex: 把每个partition中的分区号和对应的值拿出来

val func = (index: Int, iter: Iterator[(Int)]) => { iter.toList.map(x => “[partID:” + index + ", val: " + x + “]”).iterator } val rdd1 = sc.parallelize(List(1,2,3,4,5,6,7,8,9), 2) rdd1.mapPartitionsWithIndex(func).collect

aggregate: 对RDD里元素进行聚合

def func1(index: Int, iter: Iterator[(Int)]) : Iterator[String] = { iter.toList.map(x => “[partID:” + index + ", val: " + x + “]”).iterator } val rdd1 = sc.parallelize(List(1,2,3,4,5,6,7,8,9), 2) rdd1.mapPartitionsWithIndex(func1).collect

###是action操作, 第一个参数是初始值, 二:是2个函数[每个函数都是2个参数(第一个参数:先对个个分区进行合并, 第二个:对个个分区合并后的结果再进行合并), 输出一个参数]

###0 + (0+1+2+3+4 + 0+5+6+7+8+9) rdd1.aggregate(0)(+, +) rdd1.aggregate(0)(math.max(_, _), _ + _)

###5和1比, 得5再和234比得5 --> 5和6789比,得9 --> 5 + (5+9) rdd1.aggregate(5)(math.max(_, _), _ + _)

val rdd2 = sc.parallelize(List(“a”,“b”,“c”,“d”,“e”,“f”),2) def func2(index: Int, iter: Iterator[(String)]) : Iterator[String] = { iter.toList.map(x => “[partID:” + index + “, val: " + x + “]”).iterator } rdd2.aggregate(”")(_ + _, _ + ) rdd2.aggregate(“=”)( + _, _ + _)

val rdd3 = sc.parallelize(List(“12”,“23”,“345”,“4567”),2) rdd3.aggregate(“”)((x,y) => math.max(x.length, y.length).toString, (x,y) => x + y)

val rdd4 = sc.parallelize(List(“12”,“23”,“345”,“”),2) rdd4.aggregate(“”)((x,y) => math.min(x.length, y.length).toString, (x,y) => x + y)

val rdd5 = sc.parallelize(List(“12”,“23”,“”,“345”),2) rdd5.aggregate(“”)((x,y) => math.min(x.length, y.length).toString, (x,y) => x + y)

aggregateByKey:对RDD里元素先分区再聚合

val pairRDD = sc.parallelize(List( (“cat”,2), (“cat”, 5), (“mouse”, 4),(“cat”, 12), (“dog”, 12), (“mouse”, 2)), 2) def func2(index: Int, iter: Iterator[(String, Int)]) : Iterator[String] = { iter.toList.map(x => “[partID:” + index + ", val: " + x + “]”).iterator } pairRDD.mapPartitionsWithIndex(func2).collect pairRDD.aggregateByKey(0)(math.max(_, _), _ + ).collect pairRDD.aggregateByKey(100)(math.max(, _), _ + _).collect

checkpoint

sc.setCheckpointDir(“hdfs://node-1.itcast.cn:9000/ck”) val rdd = sc.textFile(“hdfs://node-1.itcast.cn:9000/wc”).flatMap(.split(" ")).map((, 1)).reduceByKey(+) rdd.checkpoint rdd.isCheckpointed rdd.count rdd.isCheckpointed rdd.getCheckpointFile

coalesce, repartition

val rdd1 = sc.parallelize(1 to 10, 10) val rdd2 = rdd1.coalesce(2, false) rdd2.partitions.length

collectAsMap : Map(b -> 2, a -> 1) val rdd = sc.parallelize(List((“a”, 1), (“b”, 2))) rdd.collectAsMap

combineByKey : 和reduceByKey是相同的效果

###第一个参数x:原封不动取出来, 第二个参数:是函数, 局部运算, 第三个:是函数, 对局部运算后的结果再做运算

###每个分区中每个key中value中的第一个值, (hello,1)(hello,1)(good,1)–>(hello(1,1),good(1))–>x就相当于hello的第一个1, good中的1 val rdd1 = sc.textFile(“hdfs://master:9000/wordcount/input/”).flatMap(.split(" ")).map((, 1)) val rdd2 = rdd1.combineByKey(x => x, (a: Int, b: Int) => a + b, (m: Int, n: Int) => m + n) rdd1.collect rdd2.collect

###当input下有3个文件时(有3个block块, 不是有3个文件就有3个block, ), 每个会多加3个10 val rdd3 = rdd1.combineByKey(x => x + 10, (a: Int, b: Int) => a + b, (m: Int, n: Int) => m + n) rdd3.collect

val rdd4 = sc.parallelize(List(“dog”,“cat”,“gnu”,“salmon”,“rabbit”,“turkey”,“wolf”,“bear”,“bee”), 3) val rdd5 = sc.parallelize(List(1,1,2,2,2,1,2,2,2), 3) val rdd6 = rdd5.zip(rdd4) val rdd7 = rdd6.combineByKey(List(_), (x: List[String], y: String) => x :+ y, (m: List[String], n: List[String]) => m ++ n)

countByKey

val rdd1 = sc.parallelize(List((“a”, 1), (“b”, 2), (“b”, 2), (“c”, 2), (“c”, 1))) rdd1.countByKey rdd1.countByValue

filterByRange

val rdd1 = sc.parallelize(List((“e”, 5), (“c”, 3), (“d”, 4), (“c”, 2), (“a”, 1))) val rdd2 = rdd1.filterByRange(“b”, “d”) rdd2.collect

flatMapValues : Array((a,1), (a,2), (b,3), (b,4)) val rdd3 = sc.parallelize(List((“a”, “1 2”), (“b”, “3 4”))) val rdd4 = rdd3.flatMapValues(_.split(" ")) rdd4.collect

foldByKey

val rdd1 = sc.parallelize(List(“dog”, “wolf”, “cat”, “bear”), 2) val rdd2 = rdd1.map(x => (x.length, x)) val rdd3 = rdd2.foldByKey(“”)(+)

val rdd = sc.textFile(“hdfs://node-1.itcast.cn:9000/wc”).flatMap(.split(" ")).map((, 1)) rdd.foldByKey(0)(+)

foreachPartition

val rdd1 = sc.parallelize(List(1, 2, 3, 4, 5, 6, 7, 8, 9), 3) rdd1.foreachPartition(x => println(x.reduce(_ + _)))

keyBy : 以传入的参数做key val rdd1 = sc.parallelize(List(“dog”, “salmon”, “salmon”, “rat”, “elephant”), 3) val rdd2 = rdd1.keyBy(_.length) rdd2.collect

keys values val rdd1 = sc.parallelize(List(“dog”, “tiger”, “lion”, “cat”, “panther”, “eagle”), 2) val rdd2 = rdd1.map(x => (x.length, x)) rdd2.keys.collect rdd2.values.collect

五、统计网站不同学科前两名

方式一:数据量大的话,可以把数据放硬盘上

方式二:自定义分区,将相同学科放在同一个分区

方式三:数据量大的话,可能会内存溢出

六、计算用户在基站停留时间最长的两个基站

方式一:

方式二; 推荐